I am thrilled to announce that as of today, 3,630 whole CT scans from the RAD-ChestCT dataset are publicly available on Zenodo, along with abnormality and location labels! You can access the dataset here. After a multi-year saga of bureaucracy and technical barriers, the data is finally up and you can use it for your own projects. In this post I’ll provide an overview of the RAD-ChestCT dataset, why it’s cool, and how you can use it.

Report-Annotated Duke Chest CT (RAD-ChestCT)

The full RAD-ChestCT dataset, as originally reported in this paper, contains 36,316 whole chest CT volumes from 19,993 unique patients. In 2021, it was the largest volumetric medical imaging dataset in the world by number of unique patients.

Every volume in the dataset is associated with a binary label matrix of 84 abnormalities and 52 locations, meaning that for each abnormality an associated location is provided. This is often helpful for generic abnormalities like “mass” which could occur in several different organs (lung, liver, kidney, etc.). RAD-ChestCT can thus be used for multilabel prediction of abnormalities in chest CTs.

While preparing the RAD-ChestCT dataset for sharing, we removed all scans for patients who (a) were under 18 or (b) did not have an age reported in the DICOM header. That left 35,747 CT volumes from adults.

As of July 2022, we’ve shared about 10% of the dataset publicly (3,630 volumes, from adults only).

Our goal is to eventually share the entire dataset of 35,747 volumes. Every slice of every CT volume has to be manually inspected to ensure no uniquely identifying features are present, so sharing the entire dataset will take a little more time. The full RAD-ChestCT dataset is currently only available within Duke’s Protected Analytics Computing Environment (PACE).

Files Available Now on Zenodo

There are 3,630 image files and 7 metadata/label files available in the Zenodo repository (https://zenodo.org/record/6406114#.YtlqUXbMLAQ).

CT Volume Files (3,630): Each CT scan is provided as a compressed 3D numpy array (npz format). This is convenient if you like to use Python for machine learning (e.g. PyTorch or TensorFlow) because it means you can load a clean 3D numpy array directly, without having to deal with DICOM, the native format of medical images. The CT scans can be read using numpy version 1.14.5 and above. Preprocessing the CT scans from DICOM files to clean 3D numpy arrays required numerous steps, which are detailed further in this post. The end-to-end Python pipeline that I developed to transform the CTs from DICOM to numpy is available here, in case you’d like to use it to create your own dataset. A PyTorch data loader for the RAD-ChestCT dataset which includes data augmentation steps like flips and rotations is available in this repository.
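As a minimal sketch of what loading one of these files might look like: the key under which the array is stored inside each npz archive is not stated in this post, so the helper below simply takes the first key it finds. The function name `load_ct_volume`, the key `ct`, and the synthetic demo file are all illustrative assumptions, not part of the dataset.

```python
import os
import tempfile

import numpy as np


def load_ct_volume(path):
    """Load one CT volume from a compressed .npz file as a 3D numpy array."""
    with np.load(path) as archive:
        # Assumption: each archive holds a single array. The key name is not
        # given in the post, so use whichever key is stored first.
        key = archive.files[0]
        volume = archive[key]
    if volume.ndim != 3:
        raise ValueError(f"expected a 3D volume, got shape {volume.shape}")
    return volume


# Demo on a tiny synthetic stand-in, since the real files require access approval.
fake_volume = np.zeros((4, 8, 8), dtype=np.int16)
with tempfile.TemporaryDirectory() as tmp_dir:
    demo_path = os.path.join(tmp_dir, "demo_volume.npz")
    np.savez_compressed(demo_path, ct=fake_volume)
    demo = load_ct_volume(demo_path)

print(demo.shape, demo.dtype)
```

With a real download, you would pass the path of one of the Zenodo npz files instead of the synthetic file.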

Metadata Files (4):

- CT_Scan_Metadata_Complete_35747.csv: includes metadata about the whole (adult) dataset, even for volumes that haven’t been shared yet. The metadata is derived from the DICOM headers and includes, for example, scan dimensions before and after preprocessing.

- Extrema_35747.csv: includes coordinates for lung bounding boxes for the whole dataset. Coordinates were derived computationally using a morphological image processing lung segmentation pipeline described in this paper.

- Indications_35747.csv: includes scan indications for the whole dataset. Indications were extracted from the free-text reports. An “indication” is the medical reason why a patient got the scan – e.g., follow up of lung cancer, assessing a nodule seen on a chest x-ray, or monitoring of interstitial lung disease.

- Summary_3630.csv: includes a listing of the 3,630 scans that are included in the repository.

Label Files (3): The label files contain abnormality x location labels for the 3,630 shared CT volumes. Each CT volume is annotated with a matrix of 84 abnormality labels x 52 location labels (sometimes reported as 83 abnormalities x 51 locations depending on whether you count “other abnormality” and “other location”). Labels were extracted from the free text reports using the Sentence Analysis for Radiology Label Extraction (SARLE) framework. For each CT scan, the label matrix was flattened and the abnormalities and locations separated by an asterisk in the CSV column header (e.g. “mass*liver”). The labels can be used as the ground truth when training computer vision classifiers on the CT volumes. Label files include: imgtrain_Abnormality_and_Location_Labels.csv (for the training set), imgvalid_Abnormality_and_Location_Labels.csv (for the validation set), and imgtest_Abnormality_and_Location_Labels.csv (for the test set). This repository contains the Python implementation of the SARLE label extraction framework used to generate the abnormality and location label matrix from the free text reports. SARLE has minimal dependencies and the abnormality and location vocabulary terms can be easily modified to adapt SARLE to different radiologic modalities, abnormalities, and anatomical locations.
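The asterisk-separated column headers described above can be split back into (abnormality, location) pairs with a few lines of standard-library Python. This is only a sketch: it assumes the first CSV column identifies the volume and every other column is a 0/1 label, and the column name `volume_id` and the three demo columns are made up for illustration.

```python
import csv
import io


def read_label_rows(handle):
    """Yield (volume_id, {(abnormality, location): 0 or 1}) for each CSV row."""
    reader = csv.reader(handle)
    header = next(reader)
    # Assumption: first column is the volume identifier, the rest are labels
    # named "abnormality*location".
    pairs = []
    for name in header[1:]:
        abnormality, _, location = name.partition("*")
        pairs.append((abnormality, location))
    for row in reader:
        values = [int(float(v)) for v in row[1:]]
        yield row[0], dict(zip(pairs, values))


def abnormalities_present(labels):
    """Collapse over locations: present if marked at any location."""
    return sorted({abn for (abn, _loc), v in labels.items() if v == 1})


# Synthetic stand-in for one of the label files.
demo_csv = io.StringIO(
    "volume_id,mass*liver,mass*lung,nodule*lung\n"
    "vol_0001,1,0,1\n"
    "vol_0002,0,0,0\n"
)
rows = list(read_label_rows(demo_csv))
for volume_id, labels in rows:
    print(volume_id, abnormalities_present(labels))
```

For the real files, you would open one of the three label CSVs with `open(path, newline="")` and pass the handle to `read_label_rows`.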

Accessing the RAD-ChestCT Dataset

Due to the sensitive nature of the data, RAD-ChestCT is “Restricted” on Zenodo, which means that you have to request permission to download the data. Once permission is granted, you can download the files directly from a web browser.

Due to technical limitations, we were unable to upload the files in larger zipped archives, so each file must be individually downloaded. The zenodo-get Python package and zen4R package look like promising ways to programmatically download files in bulk from Zenodo, but I have not tried either one myself. (Developing the dataset took several years of work, so the pain and suffering of clicking a lot of individual download buttons for a couple hours is hopefully worth it if you’re interested in the dataset.)

Convolutional Neural Networks for Multiple Abnormality Prediction from CT Scans

RAD-ChestCT can be used to train machine learning models. The GitHub repository https://github.com/rachellea/ct-net-models contains PyTorch code to train convolutional neural network models for multiple abnormality prediction from CT volumes. If you want to build your own models for multiple abnormality prediction from CTs, I recommend starting from that repository, as it already contains a RAD-ChestCT PyTorch data loader, several abnormality prediction models including CT-Net, and a module for training and evaluating performance of different models.
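To make "multiple abnormality prediction" concrete: it is a multilabel problem, so each abnormality gets its own independent yes/no output rather than competing in a single softmax. The sketch below shows one common way to score such outputs, a per-label sigmoid with binary cross-entropy, in plain Python. It is a generic illustration, not code from the repository, and the logits and targets are invented.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def multilabel_bce(logits, targets):
    """Mean binary cross-entropy over independent labels."""
    eps = 1e-12  # keeps log() away from zero
    total = 0.0
    for z, y in zip(logits, targets):
        p = min(max(sigmoid(z), eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(targets)


# One hypothetical scan with three abnormality labels.
logits = [2.0, -1.5, 0.0]   # raw model outputs, one per abnormality
targets = [1, 0, 1]         # ground-truth labels from the report
predicted = [int(sigmoid(z) >= 0.5) for z in logits]
loss = multilabel_bce(logits, targets)
print(predicted, round(loss, 3))
```

Because the labels are scored independently, a scan can be positive for several abnormalities at once, which is the usual situation in chest CT reports.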

For a conceptual overview of multiple abnormality prediction from CTs, you can check out this post and this post.

The 3,630 volumes currently available are more than enough to train different models and see decent performance on multiple abnormality prediction tasks. Training on the full dataset of 35k volumes does yield higher performance, but it’s also slow since CT scans are big: just one CT scan is about the size of the entire PASCAL VOC 2012 dataset, the full 35k CTs take up about 3 terabytes of disk space, and training and evaluating a model on the whole 35k dataset can take about 2 weeks on 2 GPUs. So, for most of my experiments during my PhD, I used a small subset of 3,000 scans anyway in order to have faster training time (i.e., 5-10 hours per model instead of 2 weeks).
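For planning disk space, the figures above allow a rough back-of-the-envelope estimate. The sketch below assumes "about 3 terabytes" means 3 × 10^12 bytes, that the full set is the 35,747 adult volumes, and that the shared files have a similar average size. None of that is confirmed here, so treat the result as an order-of-magnitude guide only.

```python
# Rough storage estimate from the figures quoted in the post.
TOTAL_BYTES = 3e12       # "about 3 terabytes" (decimal units assumed)
FULL_VOLUMES = 35_747    # full adult dataset
SHARED_VOLUMES = 3_630   # subset currently on Zenodo

bytes_per_scan = TOTAL_BYTES / FULL_VOLUMES
per_scan_mb = bytes_per_scan / 1e6
shared_gb = bytes_per_scan * SHARED_VOLUMES / 1e9

print(f"average per scan: ~{per_scan_mb:.0f} MB")
print(f"shared subset:    ~{shared_gb:.0f} GB")
```

Under these assumptions the shared subset comes out at roughly a tenth of the full dataset's footprint, matching its share of the volumes.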

Papers

If you find RAD-ChestCT useful in your research, please consider citing our paper that describes the development of RAD-ChestCT:

If you’re interested in further reading, I also recommend this paper, which proposes an explainable model called AxialNet as well as a mask loss that leverages abnormality location information to encourage the model to only predict abnormalities from the organs in which they are found:

Summary

- The RAD-ChestCT dataset is a large medical imaging dataset that includes 35,747 whole CT volumes;

- Each CT volume is annotated with 84 abnormalities x 52 locations;

- 3,630 CT volumes and their labels are available on Zenodo as of July 2022.

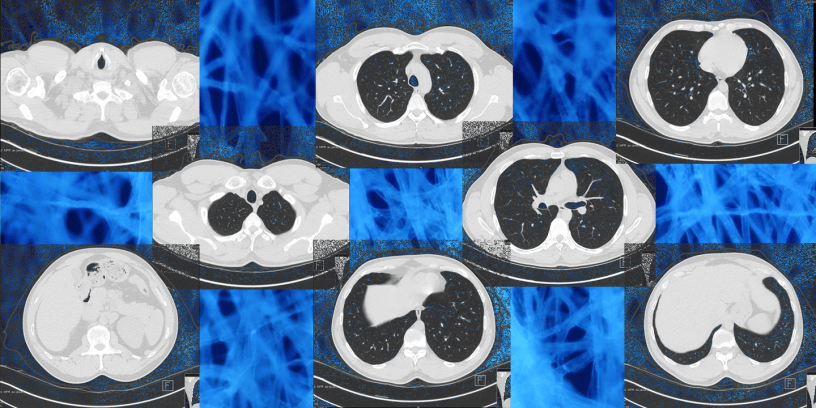

About the Featured Image

The featured image consists of CT slices from Wikipedia (Creative Commons license) and an image of paper fluorescing from Wikipedia (CC BY-SA 3.0).
