Convolutional neural networks, or CNNs for short, form the backbone of many modern computer vision systems. This post will describe the origins of CNNs, starting from biological experiments of the 1950s.

Simple and Complex Cells

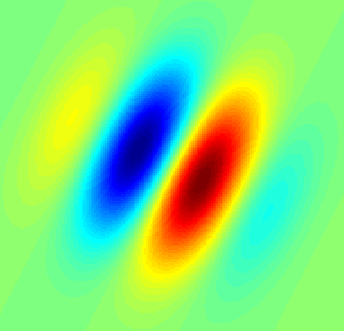

In 1959, David Hubel and Torsten Wiesel described “simple cells” and “complex cells” in the visual cortex of cats. They proposed that both kinds of cells are used in pattern recognition. A “simple cell” responds to edges and bars of particular orientations, such as this image:

A “complex cell” also responds to edges and bars of particular orientations, but it is different from a simple cell in that these edges and bars can be shifted around the scene and the cell will still respond. For instance, a simple cell may respond only to a horizontal bar at the bottom of an image, while a complex cell might respond to horizontal bars at the bottom, middle, or top of an image. This property of complex cells is termed “spatial invariance.”

Figure 1 in this paper diagrams the difference between simple and complex cells.

Hubel and Wiesel proposed in 1962 that complex cells achieve spatial invariance by “summing” the output of several simple cells that all prefer the same orientation (e.g. horizontal bars) but have different receptive fields (e.g. bottom, middle, or top of an image). By collecting information from a bunch of simple cell minions, the complex cells can respond to horizontal bars that occur anywhere.
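To make the “summing” idea concrete, here is a minimal toy sketch in Python. It is only an illustration under strong assumptions: the “image” is a small grid of 0s and 1s, a horizontal bar is a full row of 1s, and the function names `simple_cell`, `complex_cell`, and `bar_image` are hypothetical names made up for this example. Real neurons are, of course, far more complicated.

```python
# Toy illustration only: an "image" is a grid of 0s and 1s,
# and a horizontal bar is a row made entirely of 1s.

def simple_cell(image, row):
    """Respond (1) only to a horizontal bar at one specific row."""
    return 1 if all(pixel == 1 for pixel in image[row]) else 0

def complex_cell(image):
    """Sum simple cells that share an orientation (horizontal)
    but watch different rows, then respond if any of them fired."""
    total = sum(simple_cell(image, row) for row in range(len(image)))
    return 1 if total > 0 else 0

def bar_image(bar_row, size=5):
    """Build a size x size image with a single horizontal bar."""
    return [[1] * size if r == bar_row else [0] * size for r in range(size)]

top = bar_image(0)
bottom = bar_image(4)

# A simple cell tuned to the bottom row ignores a bar at the top...
print(simple_cell(top, 4), simple_cell(bottom, 4))  # 0 1
# ...while the complex cell responds to the bar in either position.
print(complex_cell(top), complex_cell(bottom))      # 1 1
```

The simple cell cares about where the bar is; the complex cell only cares that a horizontal bar is present somewhere.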

This concept – that simple detectors can be “summed” to create more complex detectors – is found throughout the human visual system, and is also the fundamental basis of convolutional neural network models.

(Side note: when this concept is taken to an extreme, you get the “grandmother cell”: the notion that somewhere in your brain there is a single neuron which responds exclusively to the sight of your grandmother.)

The Neocognitron

In the 1980s, Dr. Kunihiko Fukushima was inspired by Hubel and Wiesel’s work on simple and complex cells, and proposed the “neocognitron” model (original paper: “Neocognitron: A Self-organizing Neural Network Model for a Mechanism of Pattern Recognition Unaffected by Shift in Position”). The neocognitron model includes components termed “S-cells” and “C-cells.” These are not biological cells, but rather mathematical operations. The “S-cells” sit in the first layer of the model, and are connected to “C-cells” which sit in the second layer of the model. The overall idea is to capture the “simple-to-complex” concept and turn it into a computational model for visual pattern recognition.
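As a rough sketch of how “cells” can be mathematical operations, the Python below builds a two-layer toy loosely in the spirit of S-cells and C-cells. It is not Fukushima’s actual formulation: the functions `s_layer` and `c_layer`, the bar-shaped kernel, and the threshold are all assumptions chosen for illustration. The first layer slides a small template over the image, and the second layer pools nearby first-layer outputs so that a small shift in position does not change the result.

```python
def s_layer(image, kernel, threshold):
    """Slide the kernel over the image. Each output is one 'S-cell' that
    fires (1) when its local patch matches the kernel strongly enough."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for i in range(len(image) - kh + 1):
        row = []
        for j in range(len(image[0]) - kw + 1):
            match = sum(image[i + a][j + b] * kernel[a][b]
                        for a in range(kh) for b in range(kw))
            row.append(1 if match >= threshold else 0)
        out.append(row)
    return out

def c_layer(s_map, size):
    """Each 'C-cell' fires if any S-cell in its size x size block fires."""
    out = []
    for i in range(0, len(s_map) - size + 1, size):
        row = []
        for j in range(0, len(s_map[0]) - size + 1, size):
            row.append(max(s_map[i + a][j + b]
                           for a in range(size) for b in range(size)))
        out.append(row)
    return out

kernel = [[1, 1, 1]]  # template for a short horizontal bar

image_a = [[1, 1, 1, 0, 0, 0],   # bar in the top-left corner
           [0, 0, 0, 0, 0, 0],
           [0, 0, 0, 0, 0, 0],
           [0, 0, 0, 0, 0, 0]]
image_b = [[0, 0, 0, 0, 0, 0],   # same bar, shifted down and right by one
           [0, 1, 1, 1, 0, 0],
           [0, 0, 0, 0, 0, 0],
           [0, 0, 0, 0, 0, 0]]

s_a, s_b = s_layer(image_a, kernel, 3), s_layer(image_b, kernel, 3)
print(s_a == s_b)                          # False: S-cells notice the shift
print(c_layer(s_a, 2), c_layer(s_b, 2))    # both [[1, 0], [0, 0]]
```

The S-layer output changes when the bar moves, but the C-layer output stays the same, which is the “unaffected by shift in position” behavior in miniature.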

Convolutional Neural Networks for Handwriting Recognition

The first work on modern convolutional neural networks (CNNs) occurred in the 1990s, inspired by the neocognitron. Yann LeCun et al., in their paper “Gradient-Based Learning Applied to Document Recognition” (now cited 17,588 times), demonstrated that a CNN model which aggregates simpler features into progressively more complicated features can be successfully used for handwritten character recognition.

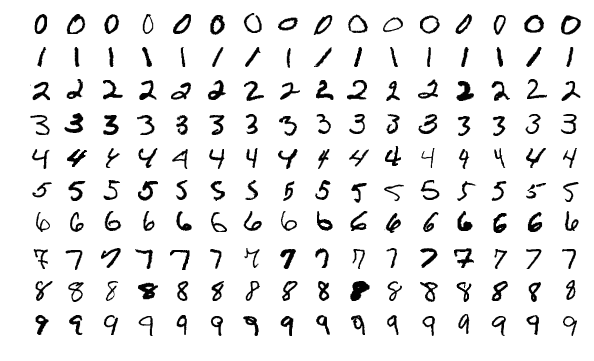

Specifically, LeCun et al. trained a CNN using the MNIST database of handwritten digits (MNIST pronounced “EM-nisst”). MNIST is a now-famous data set that includes images of handwritten digits paired with their true label of 0, 1, 2, 3, 4, 5, 6, 7, 8, or 9. A CNN model is trained on MNIST by giving it an example image, asking it to predict what digit is shown in the image, and then updating the model’s settings based on whether it predicted the digit identity correctly or not. State-of-the-art CNN models can today achieve near-perfect accuracy on MNIST digit classification.
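The predict-then-update loop described above can be sketched in a few lines of Python. To keep the example self-contained, this sketch makes several loud assumptions: it uses a simple perceptron-style linear classifier rather than a CNN, four hand-made 3x3 “images” rather than MNIST, and only two classes. Real CNNs are typically trained with gradient descent on a loss function, not with this update rule. The names `EXAMPLES`, `predict`, and `train` are hypothetical.

```python
# Toy stand-ins for MNIST: 3x3 images flattened to 9 pixels, two labels only.
EXAMPLES = [
    ([0, 1, 0,  0, 1, 0,  0, 1, 0], 1),  # vertical stroke, labeled 1
    ([1, 1, 1,  1, 0, 1,  1, 1, 1], 0),  # ring, labeled 0
    ([0, 0, 1,  0, 0, 1,  0, 0, 1], 1),  # vertical stroke, shifted right
    ([1, 1, 1,  1, 0, 1,  1, 1, 0], 0),  # ring with a missing corner
]

def predict(weights, image):
    """Score each class with a weighted sum of pixels; pick the highest."""
    scores = [sum(w * p for w, p in zip(class_w, image)) for class_w in weights]
    return scores.index(max(scores))

def train(examples, num_classes=2, epochs=10):
    """Show each image, ask for a prediction, and adjust the model's
    settings (weights) only when the prediction was wrong."""
    weights = [[0.0] * len(examples[0][0]) for _ in range(num_classes)]
    for _ in range(epochs):
        for image, label in examples:
            guess = predict(weights, image)
            if guess != label:
                for k, pixel in enumerate(image):
                    weights[label][k] += pixel   # pull the true class closer
                    weights[guess][k] -= pixel   # push the wrong class away
    return weights

weights = train(EXAMPLES)
correct = sum(predict(weights, image) == label for image, label in EXAMPLES)
print(f"{correct} of {len(EXAMPLES)} training examples classified correctly")
```

The structure is the part that carries over to real training: predict, compare against the true label, and nudge the model’s settings in response.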

Example handwritten digits from the MNIST data set.

One direct consequence of this work is that your mail is now sorted by machines, using automated handwriting recognition techniques to read the address.

Convolutional Neural Networks to See Everything

Throughout the 1990s and early 2000s, researchers carried out further work on the CNN model. Around 2012 CNNs enjoyed a huge surge in popularity (which continues today) after a CNN called AlexNet achieved state-of-the-art performance labeling pictures in the ImageNet challenge. Alex Krizhevsky et al. published the paper “ImageNet Classification with Deep Convolutional Neural Networks” describing the winning AlexNet model; this paper has since been cited 38,007 times.

Similar to MNIST, ImageNet is a public, freely available data set of images and their corresponding true labels. Instead of focusing on handwritten digits labeled 0 – 9, ImageNet focuses on “natural images,” or pictures of the world, labeled with a variety of descriptors including “amphibian,” “furniture,” and “person.” The labels were acquired through massive human effort (i.e. manual labeling – asking someone to write down “what is this a picture of” for each image). ImageNet currently includes 14,197,122 images.

Example images from the ImageNet dataset.

Throughout the past several years, CNNs have achieved excellent performance describing natural images (including ImageNet, CIFAR-10, CIFAR-100, and VisualGenome), performing facial recognition (including CelebA), and analyzing medical images (including chest x-rays, photos of skin lesions, and histopathology slides). This website, “CV Datasets on the web,” has an extensive list of more than fifty labeled image data sets that researchers can use to train and evaluate CNNs and other kinds of computer vision models. Companies are developing many exciting applications, including Seeing AI, a smartphone app that can verbally describe surroundings to blind people.

CNNs and Human Vision?

The popular press often talks about how neural network models are “directly inspired by the human brain.” In some sense, this is true, as both CNNs and the human visual system follow a “simple-to-complex” hierarchical structure. However, the actual implementation is totally different; brains are built using cells, and neural networks are built using mathematical operations.

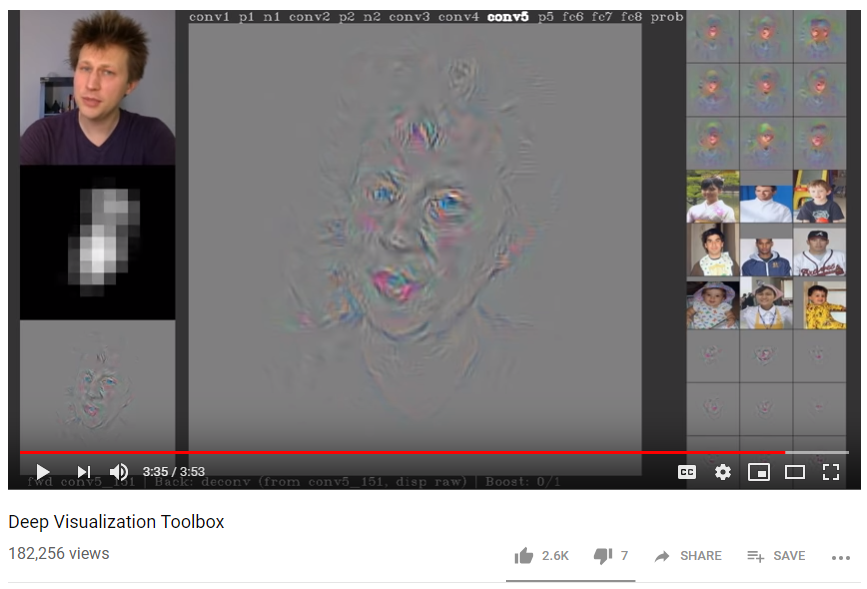

This video, “Deep Visualization Toolbox” by Jason Yosinski, is definitely worth watching for a better understanding of how CNNs take simple features and use them to detect complex features like faces or books.

Conclusion

Computer vision has come a long way in the past few decades. It is exciting to imagine what new developments will transform the field in the future, and boost technologies like automated radiology image interpretation and self-driving cars.

About the Featured Image

The featured image shows a western meadowlark. There are various bird data sets available to train CNNs to automatically recognize bird species, including the Caltech-UCSD Birds 200 data set which includes 6,033 images showing 200 bird species. In a similar vein, iNaturalist is building a crowd-sourced automated species identification system that includes birds and many other species. Such systems may someday be of great use in conservation biology.
